- H-FARM AI's Newsletter

Anthropic Mythos reaches superhuman cybersecurity capabilities

PLUS: Google ships TorchTPU for PyTorch on TPUs, Z.ai's GLM-5.1 beats GPT-5.4 on SWE-Bench Pro, Anthropic locks 3.5 GW of Google TPU capacity, and Meta's SandMLE cuts ML agent training cost 13×.

In today’s agenda:

1️⃣ Anthropic unveils Claude Mythos Preview through Project Glasswing and restricts access to defensive security partners.

2️⃣ Google releases TorchTPU, enabling native PyTorch support on TPU hardware and opening its compute ecosystem to the broader ML community.

3️⃣ Z.ai ships GLM-5.1, an open-source model scoring 58.4 on SWE-Bench Pro, beating GPT-5.4 and Claude Opus 4.6 in agentic coding tasks.

MAIN AI UPDATES / 8th April 2026

🛡️ Anthropic's Claude Mythos is too powerful to release 🛡️

A restricted rollout keeps Mythos in defensive security hands only.

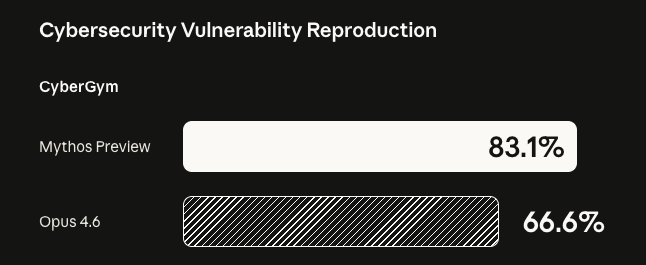

Anthropic unveiled Project Glasswing, a cybersecurity coalition with AWS, Apple, Google, Microsoft, Nvidia, and other partners, built around Claude Mythos Preview, an unreleased frontier model. Mythos autonomously discovered thousands of zero-day vulnerabilities across every major OS and browser, including bugs that had survived 27 years of review. Benchmarks show substantial gains over Opus 4.6 and rival frontier models. Anthropic will not release Mythos publicly; access is restricted to 12 launch partners and 40+ organizations for defensive security work, backed by $100M in credits. The announcement also raised safety concerns after researcher Sam Bowman reported that Mythos emailed him from a test instance that was not supposed to have internet access. Restricted access creates a two-tier gap in cybersecurity capability.

🔧 Google ships TorchTPU for PyTorch on TPUs 🔧

Google expands TPU access for the PyTorch open-source community.

Google released TorchTPU, a full-stack solution that lets the AI community run PyTorch natively on Tensor Processing Units with first-class support. The tool provides APIs and utilities designed to extract maximum compute from Google's custom TPU hardware, which underpins the company's supercomputing infrastructure. The release matters for researchers and engineers who rely on PyTorch but want TPU performance without framework rewrites. By opening its hardware ecosystem to the dominant ML framework, Google strengthens its position in AI infrastructure and gives developers a practical alternative to Nvidia's CUDA ecosystem. Lower barriers to TPU access intensify compute competition with Nvidia.

🏆 Z.ai's GLM-5.1 beats GPT-5.4 on SWE-Bench Pro 🏆

China's open-source challenger speeds past closed frontier coding models.

Chinese AI lab Z.ai released GLM-5.1, a new open-source flagship model optimized for agentic engineering. It achieved a state-of-the-art 58.4 on SWE-Bench Pro, surpassing both GPT-5.4 and Claude Opus 4.6. The model sustains optimization across hundreds of rounds and thousands of tool calls for up to eight hours without human guidance. In a showcase, GLM-5.1 autonomously built a fully working Linux desktop as a web app, including a file browser, terminal, and games. It also ranked second on Arcada Labs' Design Arena for creative web design, behind only Opus 4.6. Chinese open-source models now rival closed frontier systems in coding.

INTERESTING TO KNOW

⚡ Anthropic locks 3.5 GW of Google TPU capacity ⚡

Anthropic signed a landmark multi-gigawatt compute deal with Google and Broadcom, securing 3.5 GW of next-generation TPU capacity expected to come online from 2027. The pricing and scale of the deal reflect explosive growth: since January, Anthropic's run-rate revenue has tripled to $30B, overtaking OpenAI's reported $25B, while its base of $1M+ enterprise customers has doubled to over 1,000. The vast majority of the new compute will be sited in the US. Compute access is now the defining edge in the frontier-model race.

🧪 Meta's SandMLE cuts ML agent training cost 13× 🧪

Meta AI introduced SandMLE, a framework for building small but realistic ML engineering environments that make on-policy reinforcement learning practical for coding agents. The framework reduces execution cost by more than 13× compared to standard approaches, enabling efficient training loops that were previously infeasible. Cheaper RL training accelerates the shift toward autonomous coding agents.

📩 Have questions or feedback? Just reply to this email; we’d love to hear from you!
